Series: The Sequentia Lectures: Unlocking the Math of AI

Part 4: The AI Toolkit: Probability & Statistics

Lecture 35: Correlation vs. Causation: The Trap Most AIs (and Humans) Fall Into

In our journey through the math of AI, we’ve focused on a central theme: finding patterns. Our models are expert pattern-finders. They sift through vast landscapes of data to discover relationships and connections. The statistical term for such a relationship is correlation.

But this leads us to one of the most famous and critical warnings in all of data science: “Correlation does not imply causation.”

Understanding this distinction is absolutely essential for interpreting the results of any AI model and for avoiding a trap that both humans and machines fall into with surprising regularity.

What is Correlation?

Correlation is a statistical measure of how strongly, and in which direction, two variables move together. When two variables are correlated, a change in one tends to accompany a predictable change in the other.

- Positive Correlation: As variable A increases, variable B also tends to increase. (For example, ice cream sales tend to rise with temperature.)

- Negative Correlation: As variable A increases, variable B tends to decrease. (For example, the number of mistakes made tends to fall as the hours spent practicing rise.)

- No Correlation: The two variables show no consistent tendency to move together.
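To make these three cases concrete, here is a minimal sketch in Python using NumPy. The lecture does not name a specific measure, so this assumes the Pearson correlation coefficient r, which runs from -1 (perfect negative) through 0 (no linear relationship) to +1 (perfect positive). All of the data is synthetic, and the variable names, slopes, and noise levels are made up for illustration.

```python
import numpy as np

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length 1-D arrays."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dx = x - x.mean()  # deviations from the mean
    dy = y - y.mean()
    return float((dx * dy).sum() / np.sqrt((dx ** 2).sum() * (dy ** 2).sum()))

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
n = 500

# Positive: ice cream sales rise with temperature (synthetic numbers).
temperature = rng.uniform(15, 35, size=n)
ice_cream_sales = 20 * temperature + rng.normal(0, 40, size=n)

# Negative: mistakes fall as practice hours rise (synthetic numbers).
practice_hours = rng.uniform(0, 10, size=n)
mistakes = 30 - 2.5 * practice_hours + rng.normal(0, 3, size=n)

# None: a quantity generated independently of temperature.
unrelated = rng.normal(0, 1, size=n)

r_positive = pearson_r(temperature, ice_cream_sales)
r_negative = pearson_r(practice_hours, mistakes)
r_none = pearson_r(temperature, unrelated)

print(f"temperature vs. ice cream sales: r = {r_positive:+.2f}")
print(f"practice hours vs. mistakes:     r = {r_negative:+.2f}")
print(f"temperature vs. unrelated noise: r = {r_none:+.2f}")
```

NumPy's `np.corrcoef` computes the same quantity; the hand-written function is only there to show the arithmetic.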

Machine learning models, especially supervised learning models, are masters at detecting correlation. They learn that a certain pattern of inputs (x) is highly correlated with a certain output (y).

The Causation Trap: The Ice Cream and Shark Attacks

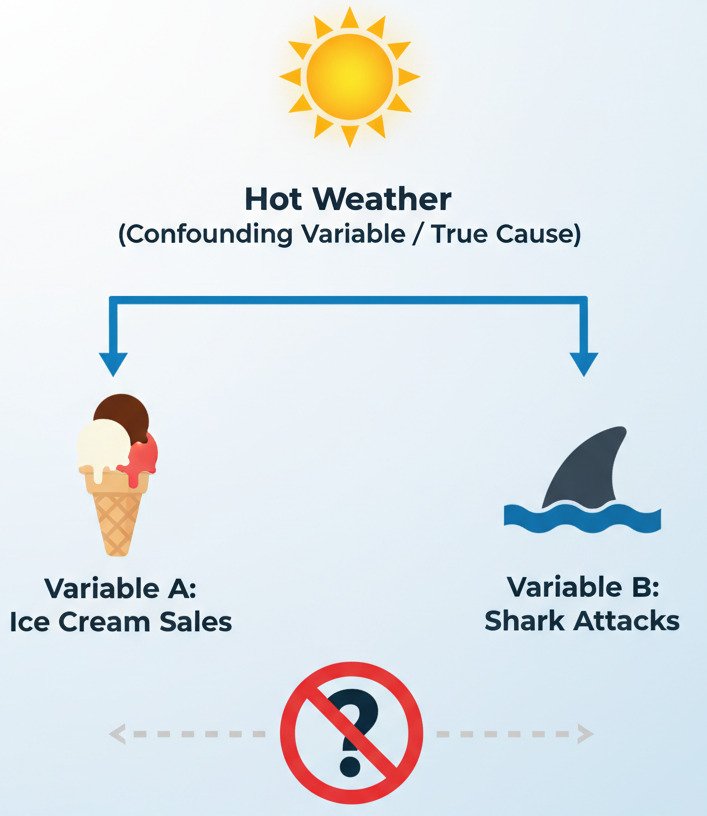

Here’s the classic example: if you analyze data from a beach town, you will find a strong positive correlation between the daily sales of ice cream and the number of shark attacks.

A naive model (or person) looking only at this correlation might conclude: “Eating ice cream causes shark attacks!” Or perhaps, “Shark attacks cause people to buy ice cream!”

Both conclusions are, of course, absurd. The correlation is real, but the causal story is wrong. Neither variable causes the other. Instead, both are driven by a third variable that the analysis left out, often called a confounding variable or lurking variable.

In this case, the confounding variable is hot weather.

- Hot weather causes more people to go to the beach and buy ice cream.

- Hot weather also causes more people to go swimming, which increases the chance of a shark encounter.

The ice cream sales and shark attacks move together, but neither one is causing the other. They are both effects of the same cause.
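The confounding story above can be simulated directly. The sketch below is a toy model, not real beach data: the coefficients, the noise levels, and the continuous `shark_encounter_index` are all invented for illustration. Temperature drives both quantities, and neither quantity appears in the formula for the other. The code then compares the raw correlation with the correlation that remains after a straight-line fit on temperature has been subtracted from each series (a simple form of partial correlation).

```python
import numpy as np

rng = np.random.default_rng(42)  # fixed seed so the run is repeatable
n_days = 2000

# The confounder: daily temperature (synthetic, in degrees Celsius).
temperature = rng.uniform(15, 35, size=n_days)

# Both effects depend on temperature plus independent noise.
# Neither one is an input to the other.
ice_cream_sales = 20 * temperature + rng.normal(0, 40, size=n_days)
shark_encounter_index = 0.3 * temperature + rng.normal(0, 1.0, size=n_days)

def residuals(y, x):
    """What is left of y after removing a straight-line fit on x."""
    slope, intercept = np.polyfit(x, y, deg=1)
    return y - (slope * x + intercept)

# Correlation as a naive analysis would see it.
raw_r = np.corrcoef(ice_cream_sales, shark_encounter_index)[0, 1]

# Correlation after accounting for temperature in both series.
adjusted_r = np.corrcoef(
    residuals(ice_cream_sales, temperature),
    residuals(shark_encounter_index, temperature),
)[0, 1]

print(f"raw correlation:                  {raw_r:+.2f}")
print(f"after accounting for temperature: {adjusted_r:+.2f}")
```

In this sketch the raw correlation should come out strongly positive while the temperature-adjusted one sits near zero, which is what we would expect when hot weather is the only link between the two.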

Why AI Models Don’t Understand “Why”

A standard AI model doesn’t have a built-in understanding of the real world, physics, or common sense. Its entire world is the numerical data it was trained on. It is an expert correlation-finding machine.

- If a medical AI is trained on data where, by chance, patients with a certain disease also happened to be treated more often in a specific hospital, the model might learn to associate the hospital with the disease, not the underlying biological factors. It finds the correlation, not the cause.

- If an image classifier is trained on photos of cows that are mostly in grassy fields, it might learn that “green background” is a very strong feature for identifying a “cow.” It might then fail to recognize a cow on a beach.

The model doesn’t understand why certain features are predictive; it only knows that, in the data it has seen, they are predictive. It learns the “what” (the correlation), not the “why” (the causation).
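The cow example can be imitated with a toy classifier. In the sketch below each "photo" is reduced to two made-up numbers: a noisy `shape` cue that really depends on the animal, and a `green` background flag that merely tends to accompany cows in the training set. The feature names, the 95% figure, and the use of a small logistic regression fitted by gradient descent are all assumptions chosen for illustration, not a description of any real image model.

```python
import numpy as np

rng = np.random.default_rng(1)  # fixed seed so the run is repeatable

def make_animals(n, is_cow, p_green):
    """Synthetic 'photos' reduced to two features (purely illustrative).

    shape: noisy cue tied to the animal itself (mean +1 for cows, -1 otherwise)
    green: +1 for a grassy background, -1 for sand; grassy with probability p_green
    """
    sign = 1.0 if is_cow else -1.0
    shape = sign + rng.normal(0, 2.0, size=n)
    green = np.where(rng.uniform(size=n) < p_green, 1.0, -1.0)
    return np.column_stack([shape, green])

# Training set: cows are almost always on grass, other animals almost never.
n_per_class = 2000
X_train = np.vstack([make_animals(n_per_class, True, 0.95),
                     make_animals(n_per_class, False, 0.05)])
y_train = np.concatenate([np.ones(n_per_class), np.zeros(n_per_class)])

# Logistic regression fitted with plain gradient descent on the log loss.
weights = np.zeros(2)  # [weight for shape, weight for green background]
bias = 0.0
learning_rate = 0.5
for _ in range(2000):
    p = 1.0 / (1.0 + np.exp(-(X_train @ weights + bias)))
    error = p - y_train
    weights -= learning_rate * (X_train.T @ error) / len(y_train)
    bias -= learning_rate * error.mean()

def predicts_cow(X):
    """True where the fitted model scores a row as 'cow'."""
    return (X @ weights + bias) > 0

# Fresh cows only: one batch on grass, one batch on sand.
recall_on_grass = predicts_cow(make_animals(1000, True, 1.0)).mean()
recall_on_beach = predicts_cow(make_animals(1000, True, 0.0)).mean()

print(f"weight on shape: {weights[0]:.2f}, weight on green background: {weights[1]:.2f}")
print(f"cows recognized on grass: {recall_on_grass:.0%}")
print(f"cows recognized on beach: {recall_on_beach:.0%}")
```

Because the background flag is the cleaner predictor in the training data, the fitted model leans on it more heavily than on the shape cue, and most beach cows are missed even though nothing about the animals themselves has changed.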

The Frontier of Causal Inference

This limitation is one of the biggest challenges in modern AI research. An entire field, causal inference, is dedicated to methods that move beyond simple correlation and estimate cause-and-effect relationships from data. These methods often involve designing controlled experiments, incorporating domain knowledge, and applying more advanced statistical techniques.
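As a rough illustration of why experiments and domain knowledge matter, the sketch below simulates a hypothetical treatment whose true effect on an outcome is fixed at +1.0. Everything here is invented for the example: the `severity` confounder, the coefficients, and the assumption that the outcome is linear. In the "observational" data, sicker patients are more likely to be treated, so a naive comparison is distorted. Randomly assigning the treatment, or adjusting for the measured confounder with a linear regression, recovers an estimate near the true effect.

```python
import numpy as np

rng = np.random.default_rng(7)  # fixed seed so the run is repeatable
n = 20000
TRUE_EFFECT = 1.0  # the treatment genuinely helps by this much (by construction)

# Confounder: how sick each patient is. Sicker patients do worse.
severity = rng.normal(0, 1, size=n)

def simulate_outcome(treated):
    """Outcome = treatment benefit - harm from severity + noise."""
    return TRUE_EFFECT * treated - 2.0 * severity + rng.normal(0, 1, size=n)

def difference_in_means(outcome, treated):
    return outcome[treated == 1].mean() - outcome[treated == 0].mean()

# 1) Observational data: sicker patients are more likely to be treated.
p_treat = 1.0 / (1.0 + np.exp(-2.0 * severity))
treated_obs = (rng.uniform(size=n) < p_treat).astype(float)
outcome_obs = simulate_outcome(treated_obs)
naive_estimate = difference_in_means(outcome_obs, treated_obs)

# 2) Randomized experiment: a coin flip decides who is treated,
#    so treatment is unrelated to severity.
treated_rct = (rng.uniform(size=n) < 0.5).astype(float)
outcome_rct = simulate_outcome(treated_rct)
randomized_estimate = difference_in_means(outcome_rct, treated_rct)

# 3) Adjustment: regress the observational outcome on treatment AND severity.
#    This only works because severity was measured and the model is linear.
design = np.column_stack([np.ones(n), treated_obs, severity])
coefficients, *_ = np.linalg.lstsq(design, outcome_obs, rcond=None)
adjusted_estimate = coefficients[1]

print(f"true effect:          {TRUE_EFFECT:+.2f}")
print(f"naive comparison:     {naive_estimate:+.2f}")
print(f"randomized trial:     {randomized_estimate:+.2f}")
print(f"adjusted regression:  {adjusted_estimate:+.2f}")
```

In this setup the naive comparison should even come out with the wrong sign, making a helpful treatment look harmful, while the randomized and adjusted estimates land close to +1.0. Real causal analyses are rarely this clean, since confounders may be unmeasured and relationships may not be linear.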

For now, it’s crucial to remember that while our AI models are incredibly powerful tools for finding patterns, the responsibility for interpreting those patterns, and for distinguishing a mere correlation from a true causal link, still rests firmly with us, the human users. Always ask why a pattern might exist, and be on the lookout for lurking, confounding variables.

This concludes Part 4 of our series! We’ve covered the essentials of probability and statistics, from quantifying uncertainty to the pitfalls of interpretation. We are now ready to put everything we’ve learned—Linear Algebra, Calculus, and Statistics—into action by building our first real model.