Series: The Sequentia Lectures: Unlocking the Math of AI

Part 2: The AI Toolkit: Linear Algebra

Lecture 8: The Dot Product: AI’s Universal “Similarity” and “Relevance” Detector

We’ve met the building blocks of AI: vectors and matrices. Now, let’s learn our first fundamental operation, the dot product. The calculation is simple, but it carries a lot of meaning. The dot product is one of AI’s primary tools for measuring similarity, alignment, and relevance.

To understand it, we need to look at it from two different perspectives: the algebraic calculation and the geometric intuition.

The Algebraic Perspective: A Weighted Sum

Let’s say we have two vectors of the same length, A and B.

Vector A = [a₁, a₂, a₃]

Vector B = [b₁, b₂, b₃]

The dot product, often written as A · B, is calculated by multiplying the corresponding elements of each vector and then adding all the results together.

A · B = (a₁ * b₁) + (a₂ * b₂) + (a₃ * b₃)

It’s a simple “multiply-and-sum” operation. The result is not another vector, but a single number (a “scalar”).
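To make the “multiply-and-sum” recipe concrete, here is a minimal Python sketch. The helper name `dot` and the sample values in `A` and `B` are illustrative choices, not part of the lecture.

```python
def dot(a, b):
    """Multiply corresponding elements, then add up the products."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(a, b))


# Illustrative vectors (assumed values, chosen only for the demo).
A = [1, 2, 3]
B = [4, 5, 6]

result = dot(A, B)  # (1 * 4) + (2 * 5) + (3 * 6)
print(result)       # 32
```

Note that the result is a single number, not a list: the two input vectors have been collapsed into one scalar.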

But what does this calculation mean? Think of it as a weighted sum. It’s a way to calculate an overall score by weighing the importance of different features.

Analogy: Calculating a Test Score

Imagine a student’s performance on three parts of a test is represented by a Scores vector:

Scores = [Correct Answers: 80, Essay Quality: 7, Time Taken: 90] (where time taken is in minutes, and lower is better).

Now, let’s define a Weights vector that represents how we want to score the test. We value correct answers and essay quality, and we want to penalize a long time. So our weights might be:

Weights = [Points per Answer: 2, Points per Essay Point: 5, Penalty per Minute: -0.5]

The final score is the dot product of these two vectors:

Score = Scores · Weights

Score = (80 * 2) + (7 * 5) + (90 * -0.5)

Score = 160 + 35 - 45 = 150

The dot product provided a single, meaningful score by combining multiple features, each with a different level of importance. This is essentially what a single neuron in a neural network does. It takes an input vector (like the pixels of an image) and a weights vector (what the AI has learned is important), calculates the dot product (in practice usually adding a bias term and applying an activation function afterward), and gets a score that represents how “relevant” the input is to a particular category.
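The test-score calculation above can be reproduced in a few lines of Python. This is only a sketch of the analogy: the variable names are illustrative, and the numbers are the ones from the Scores and Weights vectors.

```python
# Values taken from the test-score analogy.
scores = [80, 7, 90]     # correct answers, essay quality, minutes taken
weights = [2, 5, -0.5]   # points per answer, per essay point, per minute

# Each feature's contribution, then their sum (the dot product).
contributions = [s * w for s, w in zip(scores, weights)]
score = sum(contributions)

print(contributions)  # [160, 35, -45.0]
print(score)          # 150.0
```

Printing the individual contributions shows how each weight pulls the final score up or down, which is the “weighted sum” reading of the dot product.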

The Geometric Perspective: A Measure of Alignment

Now for the beautiful geometric intuition. In our “data landscape,” where vectors are points or arrows from the origin, the dot product tells us how much two vectors are pointing in the same direction.

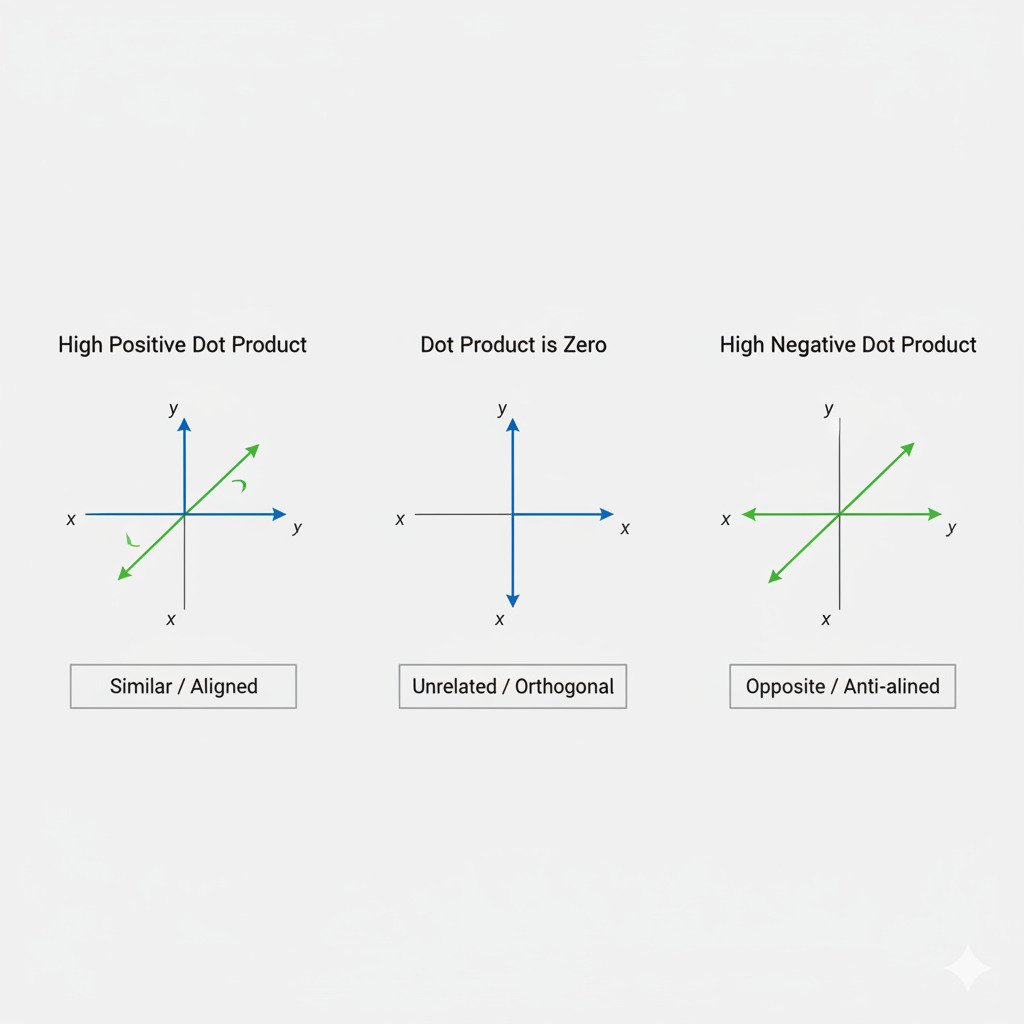

Let’s visualize two vectors, A and B, in a 2D space.

- Scenario 1: High Positive Dot Product

If vectors A and B point in very similar directions, their dot product will be positive, and large when both vectors are long. They are highly aligned. In AI terms, this means they are very similar. For example, the word vectors for “cat” and “kitten” would typically have a high positive dot product.

- Scenario 2: Dot Product is Zero

If vectors A and B are at a 90-degree angle (orthogonal) to each other, their dot product will be exactly zero. They have no alignment. In AI terms, they are unrelated. The word vectors for “cat” and “democracy” might have a dot product close to zero.

- Scenario 3: High Negative Dot Product

If vectors A and B point in opposite directions, their dot product will be negative, and large in magnitude when both vectors are long. They are anti-aligned. In this idealized picture, they represent opposite concepts, like “hot” and “cold.” (In real learned word embeddings, antonyms often end up fairly close together because they appear in similar contexts, so treat this example as an illustration of the geometry rather than a guaranteed property of word vectors.)
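The three scenarios can be checked numerically. The sketch below uses small hand-picked 2D vectors (assumed values, not real word embeddings) so the arithmetic is easy to follow.

```python
def dot(a, b):
    """Multiply corresponding elements, then add up the products."""
    return sum(x * y for x, y in zip(a, b))


A = [3, 4]

similar = [4, 3]       # points in nearly the same direction as A
orthogonal = [-4, 3]   # at a 90-degree angle to A
opposite = [-3, -4]    # points in exactly the opposite direction

print(dot(A, similar))     # 24  (3*4 + 4*3)
print(dot(A, orthogonal))  # 0   (3*-4 + 4*3)
print(dot(A, opposite))    # -25 (3*-3 + 4*-4)
```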

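Mathematically, the dot product is also related to the angle θ between the two vectors: A · B = |A| * |B| * cos(θ), where |A| and |B| are the lengths (magnitudes) of the vectors. This formula confirms our intuition: when the angle is 0°, cos(θ) is 1 (maximum alignment); when the angle is 90°, cos(θ) is 0 (no alignment); and when the angle is 180°, cos(θ) is -1 (maximum anti-alignment). It also shows that the raw dot product grows with the lengths of the vectors, so dividing by |A| * |B| leaves only cos(θ), a pure measure of direction known as cosine similarity.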
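Rearranging the formula gives cos(θ) = (A · B) / (|A| * |B|), which can be computed directly. The following is a minimal sketch using only Python’s standard library; the helper names `norm` and `cosine` and the sample vectors are assumptions made for illustration.

```python
import math


def dot(a, b):
    """Multiply corresponding elements, then add up the products."""
    return sum(x * y for x, y in zip(a, b))


def norm(a):
    """Length of a vector: the square root of its dot product with itself."""
    return math.sqrt(dot(a, a))


def cosine(a, b):
    """cos(theta) between a and b. Undefined if either vector has length 0."""
    return dot(a, b) / (norm(a) * norm(b))


A = [3, 4]
B = [4, 3]
A_scaled = [30, 40]  # same direction as A, ten times longer

cos_ab = cosine(A, B)
# Clamp to [-1, 1] to guard against tiny floating-point overshoot.
angle_deg = math.degrees(math.acos(max(-1.0, min(1.0, cos_ab))))

print(dot(A, B), dot(A_scaled, B))  # raw dot product changes with length
print(cos_ab, cosine(A_scaled, B))  # cosine stays the same
print(angle_deg)                    # angle between A and B in degrees
```

Scaling A changes the raw dot product but not the cosine, which is why cosine is often preferred when only direction should count.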

The Universal Detector

By combining these two perspectives, we see the true power of the dot product:

- Algebraically, it acts as a “relevance engine,” calculating a weighted score.

- Geometrically, it acts as a “similarity detector,” measuring the alignment of concepts.

This one simple operation is the workhorse behind countless AI applications. It’s used in recommendation systems to find users with similar tastes, in search engines to find documents relevant to a query, and in every neuron of a neural network to weigh the importance of incoming signals.
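As a rough illustration of the search-engine use case, the sketch below ranks a few documents by their dot product with a query vector. The document names and all vector values are hypothetical, and real systems use learned embeddings with many more dimensions.

```python
def dot(a, b):
    """Multiply corresponding elements, then add up the products."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical 3-dimensional vectors, invented for this example.
query = [1.0, 0.0, 2.0]
documents = {
    "doc_a": [0.9, 0.1, 1.8],    # points roughly the same way as the query
    "doc_b": [0.0, 1.0, 0.0],    # orthogonal to the query
    "doc_c": [-1.0, 0.2, -0.5],  # points away from the query
}

# Score every document, then sort from most to least relevant.
scores = {name: dot(query, vec) for name, vec in documents.items()}
ranked = sorted(scores, key=scores.get, reverse=True)

for name in ranked:
    print(name, scores[name])
```

The same pattern applies to recommendations: replace the query with one user’s taste vector and the documents with other users or items.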

Mastering the dot product is mastering the fundamental calculation of relevance in the world of AI. In our next lecture, we’ll see how this operation is a key part of matrix multiplication.