Series: The Sequentia Lectures: Unlocking the Math of AI

Part 2: The AI Toolkit: Linear Algebra

Lecture 7: Matrices: The Organizers of Data and Transformations

In our last lecture, we introduced the fundamental building block of AI: the vector, an ordered list of numbers representing a single data point. We learned to think of a vector as a single point in a vast data landscape.

But AI rarely works with just one data point. It learns from thousands or millions of them. How do we organize all these vectors? The answer is the second essential tool in our linear algebra toolkit: the matrix.

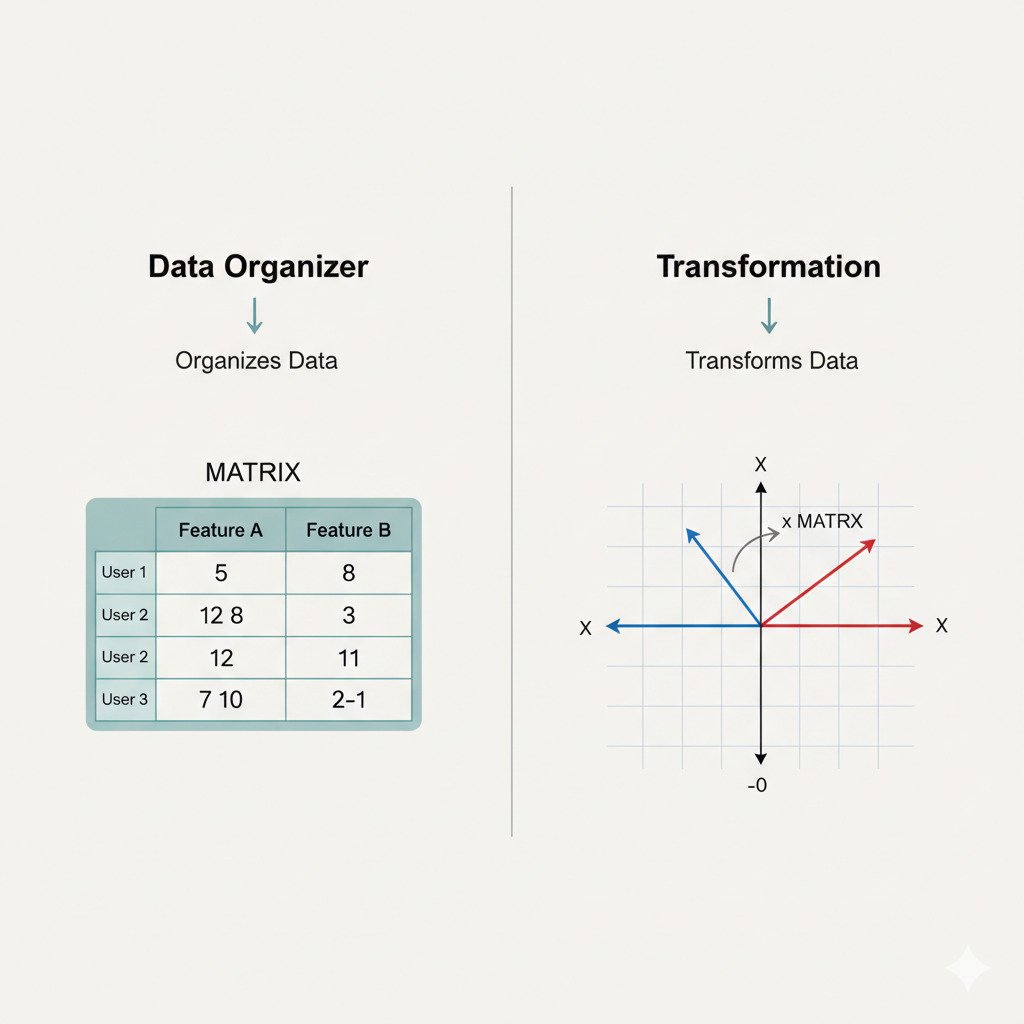

A Matrix as a Spreadsheet of Data

At its core, a matrix is simply a rectangular grid of numbers, arranged in rows and columns. If you’ve ever used a spreadsheet like Excel or Google Sheets, you already have an intuitive understanding of a matrix.

This grid structure is perfect for organizing an entire dataset. We follow a simple convention:

- Rows represent individual data points (our vectors).

- Columns represent the different features of that data.

Let’s go back to our movie ratings example. Imagine we have ratings from three different users:

- User 1 (You): [5, 5, 3]

- User 2 (Alice): [4, 5, 2]

- User 3 (Bob): [2, 1, 5]

Instead of three separate vectors, we can organize them into a single matrix. Each user’s vector becomes a row:

| | The Matrix | Inception | Toy Story |
|---|---|---|---|
| User 1 | 5 | 5 | 3 |
| User 2 | 4 | 5 | 2 |
| User 3 | 2 | 1 | 5 |

This is a 3×3 matrix (3 rows, 3 columns). Now, our entire dataset is a single, neat mathematical object. Similarly, a dataset of 1,000 images, each with 784 pixels (features), could be represented as a massive 1000×784 matrix. A matrix is the container for our collection of vectors.
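To make the row and column convention concrete, here is a minimal sketch in plain Python that stores the ratings above as a list of rows. The variable names (`ratings`, `movies`, `users`) are illustrative choices, not part of the lecture, and a real project would more likely use an array library.

```python
# Movie ratings from the lecture: one row per user, one column per movie.
movies = ["The Matrix", "Inception", "Toy Story"]
users = ["You", "Alice", "Bob"]

ratings = [
    [5, 5, 3],  # User 1 (You)
    [4, 5, 2],  # User 2 (Alice)
    [2, 1, 5],  # User 3 (Bob)
]

# Shape of the matrix: (number of rows, number of columns).
shape = (len(ratings), len(ratings[0]))

# A row is one data point: all of Alice's ratings.
alice_row = ratings[users.index("Alice")]

# A column is one feature: every user's rating of "Toy Story".
col = movies.index("Toy Story")
toy_story_column = [row[col] for row in ratings]

print(shape)             # (3, 3)
print(alice_row)         # [4, 5, 2]
print(toy_story_column)  # [3, 2, 5]
```

Reading across a row answers "what does this user think?", while reading down a column answers "how is this movie rated?".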

The Second Role of Matrices: Actions and Transformations

Organizing data is just the beginning. The truly powerful and mind-bending role of a matrix in AI is to represent an action or a transformation. A matrix can be thought of as a recipe for manipulating our vectors.

When we multiply a vector by a special “transformation matrix,” we’re not just getting a new number; we’re getting a new vector. We are effectively moving our data point to a new location in the landscape. What kind of moves can a matrix perform?

- Scaling: A matrix can stretch or shrink a vector, making it longer or shorter.

- Rotation: A matrix can rotate a vector around the origin, changing its direction.

- Shearing/Skewing: A matrix can tilt or slant a vector.

- Projection: A matrix can project a vector from a higher dimension to a lower one, like casting a 3D object’s shadow onto a 2D wall.
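The four moves listed above can be sketched with small example matrices. This is an illustration under assumptions: the helper `matvec` and the specific matrix values are chosen here for demonstration, and the mechanics of the multiplication itself are left for the later lectures.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


v = [1.0, 2.0]

# Scaling: double the length of the vector.
scale = [[2.0, 0.0],
         [0.0, 2.0]]

# Rotation: 90 degrees counterclockwise around the origin.
theta = math.pi / 2
rotate = [[math.cos(theta), -math.sin(theta)],
          [math.sin(theta),  math.cos(theta)]]

# Shear: slant the vector horizontally in proportion to its height.
shear = [[1.0, 1.0],
         [0.0, 1.0]]

# Projection: drop the z coordinate of a 3D vector (a 3D -> 2D "shadow").
project = [[1.0, 0.0, 0.0],
           [0.0, 1.0, 0.0]]

scaled = matvec(scale, v)                    # [2.0, 4.0]
rotated = matvec(rotate, v)                  # approximately [-2.0, 1.0]
sheared = matvec(shear, v)                   # [3.0, 2.0]
shadow = matvec(project, [1.0, 2.0, 3.0])    # [1.0, 2.0]

print(scaled, rotated, sheared, shadow)
```

Note that the projection matrix has 2 rows and 3 columns, which is why a 3-number vector goes in and a 2-number vector comes out.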


Imagine our data landscape. Applying a transformation matrix to every data point in a cluster is like picking up the entire cluster and rotating, stretching, or slanting it, so that every point lands in a new position in the landscape.
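As a rough sketch of that idea, the snippet below applies one matrix to every point in a small cluster. The cluster coordinates and the `stretch` matrix are made up for illustration, and `matvec` is a hypothetical helper name.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# A small, made-up cluster of 2D data points (one point per row).
cluster = [
    [1.0, 1.0],
    [2.0, 1.0],
    [1.5, 2.0],
]

# Stretch horizontally by 3 and leave the vertical direction unchanged.
stretch = [[3.0, 0.0],
           [0.0, 1.0]]

# The same transformation is applied to each point in turn.
moved_cluster = [matvec(stretch, point) for point in cluster]

print(moved_cluster)  # [[3.0, 1.0], [6.0, 1.0], [4.5, 2.0]]
```

Every point is reshaped by the same rule, so the cluster changes as a whole rather than point by point.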

Why is this Transformation so Important?

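This is the secret behind how neural networks “think.” A neural network is essentially a series of layers, and each layer is, at its core, a transformation matrix. (Real layers usually also add a bias and a nonlinear activation function; we set those aside here to focus on the matrix.)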

When you feed an input vector (like an image) into a neural network, it goes through a sequence of matrix multiplications.

- Layer 1 (a matrix) rotates and stretches the input vector.

- Layer 2 (another matrix) takes that transformed vector and rotates and stretches it again.

- This continues through all the layers.
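The layer-by-layer picture above can be sketched as a loop over matrices. This follows the lecture's simplified view in which a layer is only a matrix; the weights below are hypothetical values picked by hand, whereas a trained network would learn them, and practical layers typically include a bias and a nonlinear activation as well.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# Hypothetical layer matrices, chosen by hand for illustration.
layer1 = [[0.0, -1.0],
          [1.0,  0.0]]   # rotates 90 degrees counterclockwise
layer2 = [[2.0, 0.0],
          [0.0, 0.5]]    # stretches x, shrinks y


def forward(layers, x):
    """Pass the vector x through each layer matrix in order."""
    for weights in layers:
        x = matvec(weights, x)
    return x


x = [1.0, 2.0]
hidden = matvec(layer1, x)              # [-2.0, 1.0]
output = forward([layer1, layer2], x)   # [-4.0, 0.5]

print(hidden, output)
```

Each layer receives the vector produced by the previous one, so the order of the matrices matters.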

The “learning” process in a neural network is all about finding a set of transformation matrices that works well. The network learns the sequence of rotations, stretches, and skews needed to move the input data points (e.g., all “cat” image vectors) into a specific region of the landscape where they can be easily classified as “cats.”

So, a matrix has a dual personality:

- As a “spreadsheet,” it organizes our static collection of data points.

- As a “transformation,” it acts as a dynamic verb, an action that reshapes our data landscape.

Understanding this dual role is crucial. In our next lectures, we’ll explore the mechanics of how these matrix operations, like multiplication, actually work.