Series: The Sequentia Lectures: Unlocking the Math of AI

Part 2: The AI Toolkit: Linear Algebra

Lecture 13: Eigenvectors & Eigenvalues: Finding the ‘Most Important’ Directions in Data

The terms eigenvector and eigenvalue might be among the most intimidating in all of linear algebra. But beneath the complex name lies a beautiful and surprisingly intuitive idea that is fundamental to how AI finds the “most important” patterns in a dataset.

Let’s demystify these concepts by thinking about transformations.

The Special Vectors That Don’t Change Direction

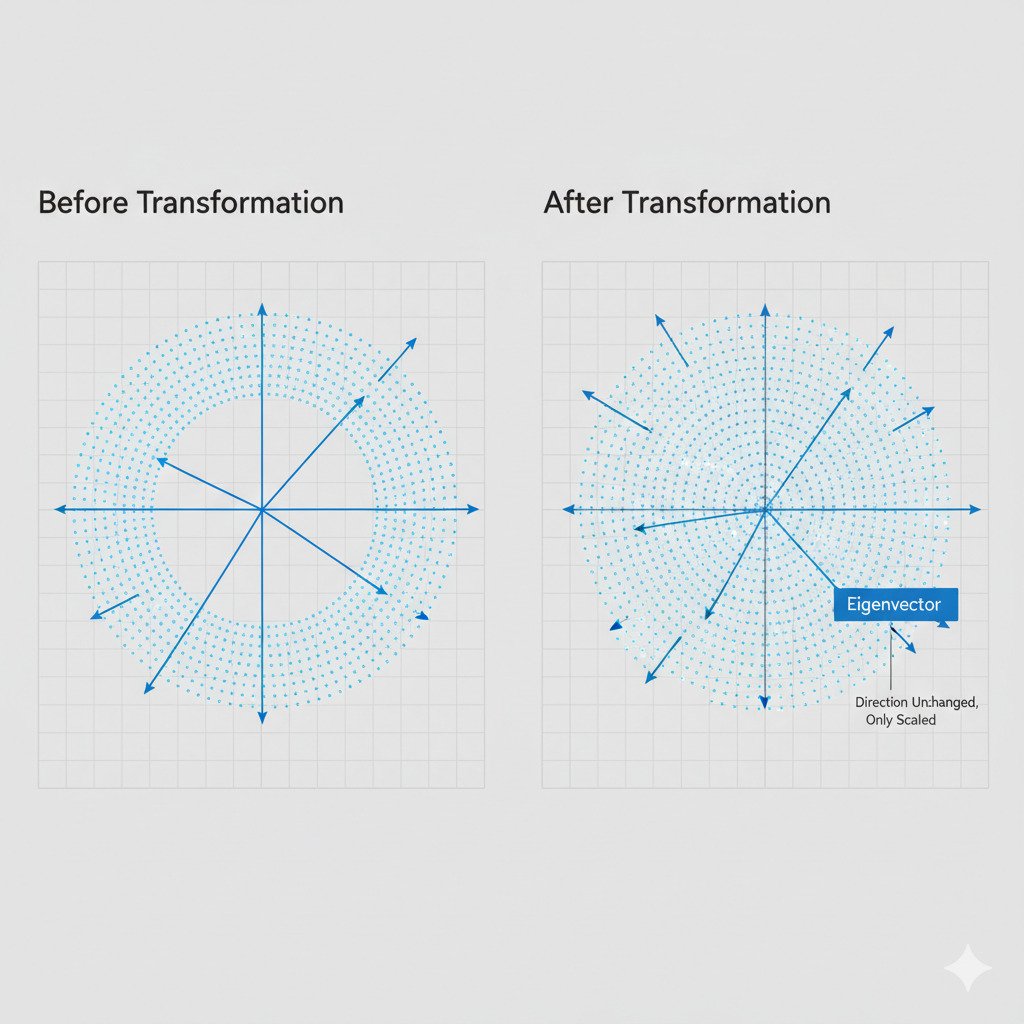

Remember from our lecture on matrices that a matrix can represent a transformation—an action like a rotation, a stretch, or a shear that changes vectors. When you apply a transformation matrix to most vectors, they get knocked off their original path; their direction changes.

Imagine a square grid of points on a 2D plane. If we apply a “shear” transformation, the whole grid tilts. Nearly every vector pointing from the origin to a point on the grid will be pointing in a new direction after the transformation.

But there are always a few special vectors. Even amidst all this twisting and warping, these special vectors do not change their direction at all. They might get longer, they might get shorter, or they might flip to point the opposite way, but they stay on their original line through the origin.

These special, directionally-stable vectors are the eigenvectors of that transformation.

- An eigenvector of a matrix is a non-zero vector that, when the matrix’s transformation is applied, only changes in scale (it gets stretched or shrunk), not in direction.

Eigenvalues: The “Stretching Factor”

So, an eigenvector keeps its direction. But how much does it get stretched? That’s what the eigenvalue tells us.

Each eigenvector has a corresponding eigenvalue, which is a simple number that represents the scaling factor.

- If the eigenvalue is 2, the eigenvector is stretched to twice its original length.

- If the eigenvalue is 0.5, the eigenvector is shrunk to half its length.

- If the eigenvalue is -1, the eigenvector is flipped and points in the exact opposite direction, but remains on the same line.

The relationship can be summarized by this core equation:

A * v = λ * v

Where:

- A is the transformation matrix.

- v is the eigenvector.

- λ (the Greek letter lambda) is the eigenvalue (a scalar/number).

This equation elegantly states: “Applying the transformation A to the special vector v has the same effect as simply scaling v by the amount λ.”

Why This is Crucial for AI: Finding the Principal Components

This is all very interesting, but what does it have to do with AI and finding patterns in data?

In data science, we often have a “cloud” of data points—our data landscape. This cloud has a shape. It might be a circular blob, or it might be stretched out into an ellipse, like a rugby ball. The directions along which this data cloud is most “stretched out” are the directions of highest variance—the directions where the data changes the most. These directions are the most informative; they represent the most important patterns or relationships in the data.

It turns out that these directions of highest variance correspond exactly to the eigenvectors of a special matrix derived from our data (the “covariance matrix”).

- The eigenvector with the largest eigenvalue points in the direction of the most significant pattern in the data (the longest axis of our data cloud).

- The second-largest eigenvalue’s eigenvector points in the next most significant direction, and so on.

This process, called Principal Component Analysis (PCA), is a cornerstone of machine learning. By calculating the eigenvectors and eigenvalues of our dataset, we can:

- Find the “Most Important” Features: We discover the underlying “principal components” that capture the most information about our data.

- Reduce Dimensionality: If we have 100 features but find that the first 3 eigenvectors account for 95% of the data’s variance, we can simplify our problem. We can project our 100-dimensional data down onto these 3 key directions (eigenvectors) without losing much information, making our models faster and sometimes more accurate.

- Visualize High-Dimensional Data: We can plot our complex data along its two or three most important principal components (eigenvectors) to see its underlying structure on a simple 2D or 3D graph.

Eigenvectors and eigenvalues, therefore, give us a mathematical way to ask our data, “What are your most important, fundamental patterns?” They allow us to distill the signal from the noise and find the stable axes that define the very shape of our data landscape.