Series: The Sequentia Lectures: Unlocking the Math of AI

Part 4: The AI Toolkit: Probability & Statistics

Lecture 26: Probability 101: Quantifying Belief and Uncertainty

Welcome to Part 4 of the Sequentia Lectures! We’ve spent the last two sections building a powerful toolkit from Linear Algebra and Calculus. We can now represent data and systematically optimize our models. But there’s one crucial element of the real world that we haven’t yet addressed: uncertainty.

The world is not a perfectly deterministic place. Data is noisy, measurements are imperfect, and outcomes are often a matter of chance. How can our mathematical models reason about a future that isn’t set in stone? The answer is the language of probability.

What is Probability? A Measure of Belief

At its core, probability is a way to quantify belief or uncertainty. It’s a number assigned to an event, ranging from 0 to 1, that represents how likely we think that event is to occur.

- Probability = 0: The event is impossible. (e.g., The probability of rolling a 7 on a standard six-sided die is 0).

- Probability = 1: The event is absolutely certain. (e.g., The probability that the sun will rise tomorrow is, for all practical purposes, 1).

- Probability = 0.5: The event is as likely to happen as it is not to happen. (e.g., The probability of a fair coin landing on heads is 0.5).

When an AI weather model predicts an “80% chance of rain,” it’s making a probabilistic statement. It’s using the language of probability to express its “degree of belief” in the outcome of “rain,” based on the input data it has seen. It’s not making an absolute claim; it’s providing an educated, quantified guess.

The Basic Rules of the Game

To work with these numbers, we need a few simple, foundational rules. Let’s consider a simple experiment, like rolling a single fair six-sided die. The set of all possible outcomes is {1, 2, 3, 4, 5, 6}.

Rule 1: The Probability of Any Single Outcome

When every outcome is equally likely, as with a fair die, the probability of an event is the number of outcomes in which it happens divided by the total number of possible outcomes.

- The probability of rolling a 4, P(4), is 1/6.

- The probability of rolling a 1, P(1), is 1/6.
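To make Rule 1 concrete, here is a minimal Python sketch of the counting idea. The helper name `probability` is our own invention for illustration, and it assumes every outcome in the sample space is equally likely (which is true for a fair die, but not in general).

```python
from fractions import Fraction

# Sample space for one roll of a fair six-sided die.
outcomes = [1, 2, 3, 4, 5, 6]

def probability(event, sample_space):
    """P(event) when all outcomes are equally likely (hypothetical helper).

    Counts the outcomes that belong to the event and divides by the
    total number of outcomes.
    """
    favorable = [o for o in sample_space if o in event]
    return Fraction(len(favorable), len(sample_space))

p_four = probability({4}, outcomes)
p_one = probability({1}, outcomes)
p_seven = probability({7}, outcomes)  # impossible on this die

print(p_four, p_one, p_seven)  # 1/6 1/6 0
```

Using `Fraction` instead of floating-point numbers keeps the results exact, so they match the fractions written above.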

Rule 2: The Sum of All Probabilities is 1

If you add up the probabilities of all possible, mutually exclusive outcomes, the sum must be 1. (Mutually exclusive means only one of them can happen at a time).

- P(1) + P(2) + P(3) + P(4) + P(5) + P(6) = 1/6 + 1/6 + 1/6 + 1/6 + 1/6 + 1/6 = 1. This makes sense; it’s a certainty that one of these outcomes will occur.
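As a quick sanity check on Rule 2, the sketch below stores the die's probabilities in a dictionary (the name `die` is just an illustrative choice) and adds them up.

```python
from fractions import Fraction

# Each face of a fair six-sided die gets probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}

# Summing over every mutually exclusive outcome should give exactly 1.
total = sum(die.values())

print(total)  # 1
```

The same check is a handy habit whenever you build a probability table by hand: if the total is not 1, an outcome has been missed or counted twice.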

Rule 3: The “OR” Rule (Addition)

The probability of event A OR event B happening is the sum of their individual probabilities (as long as they are mutually exclusive).

- What is the probability of rolling a 1 OR a 2?

P(1 or 2) = P(1) + P(2) = 1/6 + 1/6 = 2/6 = 1/3.
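The addition rule can be sketched the same way. The function `p_any` below is a hypothetical helper, and it relies on the fact that distinct faces of a single roll are mutually exclusive; it would not be valid for events that can overlap.

```python
from fractions import Fraction

die = {face: Fraction(1, 6) for face in range(1, 7)}

def p_any(faces, dist):
    """P(face_1 OR face_2 OR ...) for a single roll (hypothetical helper).

    Distinct faces cannot occur together, so their probabilities add.
    Converting to a set avoids counting a repeated face twice.
    """
    return sum(dist[f] for f in set(faces))

p_1_or_2 = p_any([1, 2], die)

print(p_1_or_2)  # 1/3
```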

Why Probability is the Language of AI

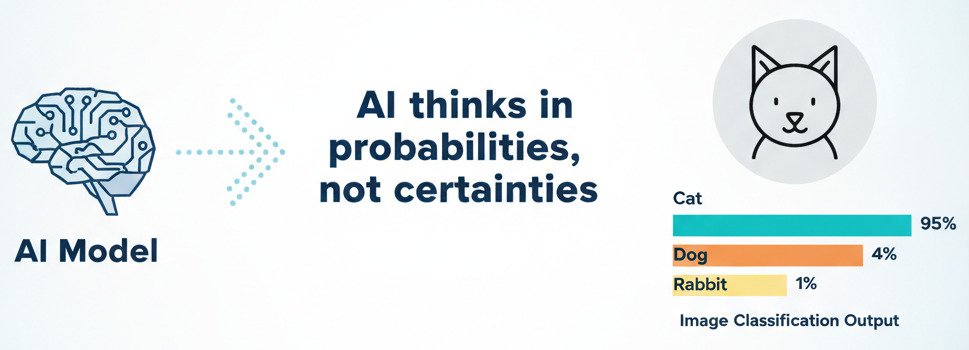

AI models are rarely 100% certain about anything. Their power lies in their ability to handle ambiguity and make the “best possible guess” based on incomplete or noisy data.

- Classification as Probability: When an AI model looks at an image and has to decide if it’s a “cat” or a “dog,” it usually doesn’t just output a single word. Instead, its final layer (often a “softmax” layer) outputs a probability distribution:

{ “Cat”: 0.95, “Dog”: 0.04, “Rabbit”: 0.01 }

The model is expressing its belief: “I am 95% confident this is a cat, but there’s a small 4% chance it could be a dog.” The final decision (“cat”) is simply the outcome with the highest probability.

- Modeling Complex Systems: Probability allows us to build models of complex systems where we can’t possibly know every variable. In natural language processing, a model might predict the next word in a sentence by calculating the probability of every word in its vocabulary appearing next.

- Measuring Confidence: By outputting probabilities, a model can tell us not just what it thinks, but how sure it is. Provided the model’s probabilities are well calibrated, a prediction with 99% confidence is far more reliable than one with 51% confidence, and this is crucial information for making real-world decisions.
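To see how a classifier might arrive at a distribution like the cat/dog/rabbit one above, here is a minimal softmax sketch in plain Python. The raw scores in `logits` are made-up numbers chosen for illustration, not the output of any real model, and real frameworks provide their own softmax implementations.

```python
import math

def softmax(scores):
    """Turn a list of raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["Cat", "Dog", "Rabbit"]
logits = [4.0, 0.8, -0.5]  # hypothetical raw scores from a final layer

probs = softmax(logits)
distribution = dict(zip(labels, probs))

# The final decision is the label with the highest probability.
prediction = max(distribution, key=distribution.get)

for label, p in distribution.items():
    print(f"{label}: {p:.2f}")
print("Prediction:", prediction)
```

Notice that the output obeys the rules from earlier in this lecture: every value lies between 0 and 1, and together they sum to 1.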

Probability provides the essential mathematical framework for our models to reason under uncertainty. It allows them to move beyond simple, deterministic f(x) = y relationships and into the nuanced, probabilistic world of “educated guesses.” It’s the bridge between the clean, abstract world of pure math and the messy, uncertain reality of the data we want to understand.