Series: The Sequentia Lectures: Unlocking the Math of AI

Part 2: The AI Toolkit: Linear Algebra

Lecture 11: Tensors: The Multi-Dimensional Grids that Power Deep Learning

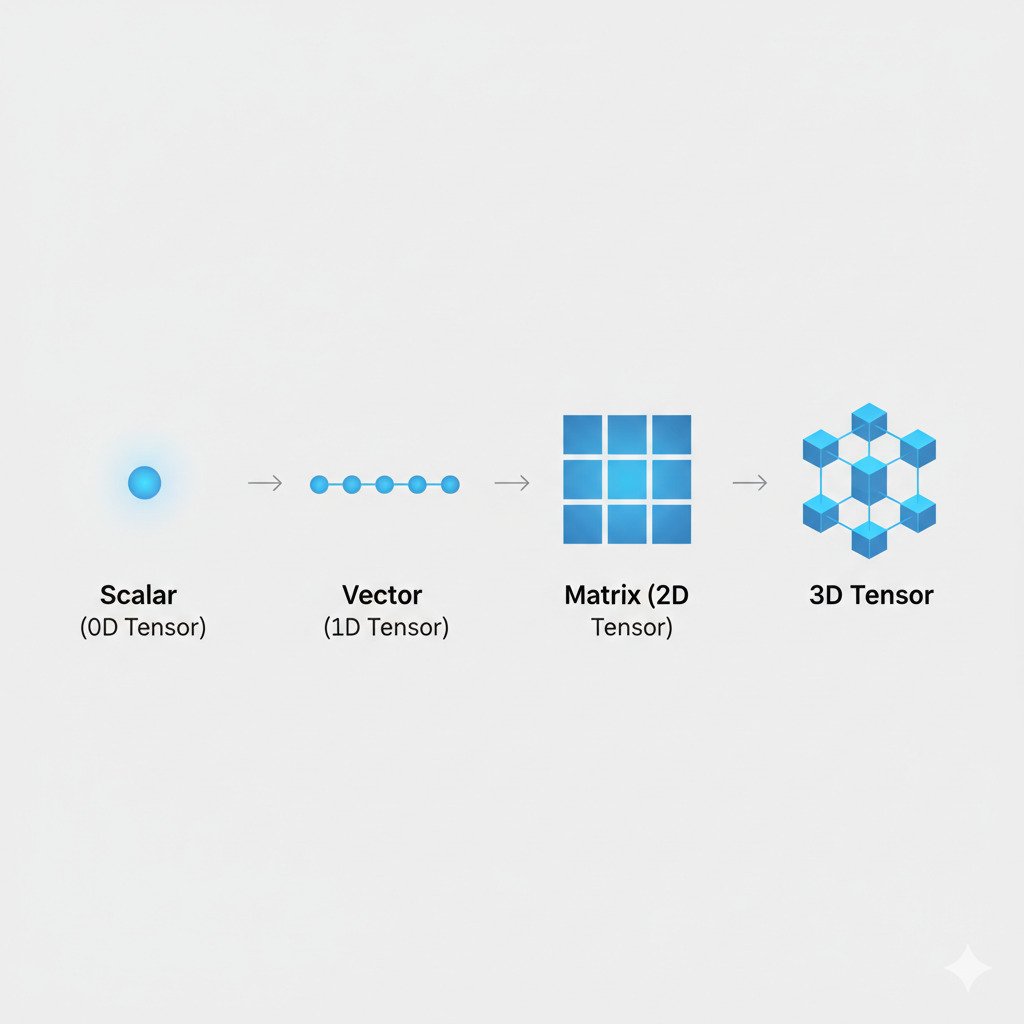

So far in our exploration of linear algebra, we’ve become familiar with two key players: vectors (1D lists of numbers) and matrices (2D grids of numbers). These tools are powerful, but to truly understand the language of modern deep learning—the technology behind everything from image recognition to advanced AI like ChatGPT—we need to meet their bigger sibling: the tensor.

If the term “tensor” sounds intimidating, don’t worry. The core concept is a natural and intuitive extension of what we already know.

From Lines and Grids to Multi-Dimensional Arrays

Let’s think about dimensions:

- A single number (like 5) is a 0-dimensional tensor, also called a scalar. It’s just a point.

- A list of numbers (like [5, 3, 2]) is a 1-dimensional tensor, which we know as a vector. It’s a line of numbers.

- A grid of numbers (a list of lists) is a 2-dimensional tensor, which we know as a matrix. It’s a flat rectangle of numbers.

What comes next? A 3-dimensional tensor is a “cube” of numbers—a list of matrices. A 4-dimensional tensor is a list of these cubes, and so on.

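A tensor, in the context of AI, is simply a multi-dimensional grid (or array) of numbers. Vectors and matrices are just specific types of tensors (1D and 2D, respectively). Note that “dimension” here counts how many indices you need to locate one number in the grid, not how many numbers the grid holds: the vector [5, 3, 2] has three entries but is still a 1D tensor.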
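To make the progression from scalar to cube concrete, here is a minimal sketch using NumPy arrays as stand-ins for tensors. The choice of NumPy and the variable names are assumptions made for illustration; the lecture itself does not prescribe a library.

```python
import numpy as np

scalar = np.array(5)                # 0D: a single number
vector = np.array([5, 3, 2])        # 1D: a list of numbers
matrix = np.array([[1, 2, 3],
                   [4, 5, 6]])      # 2D: a list of lists
cube = np.zeros((2, 3, 4))          # 3D: a list of 2 matrices, each 3x4

# ndim is the number of dimensions; shape is the size along each one.
for name, t in [("scalar", scalar), ("vector", vector),
                ("matrix", matrix), ("cube", cube)]:
    print(f"{name}: ndim={t.ndim}, shape={t.shape}")
```

The `ndim` attribute reports the number of dimensions (0, 1, 2, 3 here), while `shape` reports how many entries lie along each dimension.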

Why Do We Need Tensors? Data is Often Multi-Dimensional!

Tensors are fundamental to deep learning because the data we work with is often naturally multi-dimensional, and a tensor can represent that data without discarding its inherent structure.

Example 1: The Color Image

In Lecture 2, we described a color image as three overlapping grids for Red, Green, and Blue (RGB). How do we represent this as a single object? With a 3D tensor!

A color image is a tensor with three dimensions:

- Dimension 1: Image Height (e.g., 256 pixels)

- Dimension 2: Image Width (e.g., 256 pixels)

- Dimension 3: Color Channels (3 channels: R, G, B)

So, a 256×256 color image is a tensor of shape (256, 256, 3). It’s a “cube” of numbers where each slice along the third dimension is one of the color grids. This structure is far more natural than flattening the image into one giant vector of 196,608 numbers: the values would all still be there, but the layout that tells us which pixels are neighbors would no longer be explicit.
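The image tensor described above can be sketched in a few lines. This is an illustration under assumptions: NumPy stands in for a tensor library, and random 8-bit values stand in for real pixel data.

```python
import numpy as np

# Hypothetical image: random 8-bit values in place of real pixels.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)

red = image[:, :, 0]        # one slice of the cube: the red grid
pixel = image[10, 20]       # the (R, G, B) values at row 10, column 20
flat = image.reshape(-1)    # the same numbers as one long vector

print(image.shape)  # (256, 256, 3)
print(red.shape)    # (256, 256)
print(pixel.shape)  # (3,)
print(flat.shape)   # (196608,)
```

In the flattened vector, the pixel directly below a given pixel sits 768 positions later (256 pixels per row times 3 channels), so vertical neighbors are no longer adjacent in the data.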

Example 2: A Video Clip

A video is just a sequence of images (frames). How do we represent a 5-second video clip? With a 4D tensor!

A short color video is a tensor with four dimensions:

- Dimension 1: Number of Frames (e.g., 120 frames for a 5-second video at 24fps)

- Dimension 2: Frame Height (e.g., 480 pixels)

- Dimension 3: Frame Width (e.g., 640 pixels)

- Dimension 4: Color Channels (3 channels: R, G, B)

The shape of this tensor would be (120, 480, 640, 3). It’s a list of the 3D “cubes” we used for still images.
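As a rough sketch of the video tensor, the snippet below builds an all-black placeholder clip with the shape given above. NumPy and the 8-bit pixel type are assumptions chosen for illustration.

```python
import numpy as np

fps, seconds = 24, 5
num_frames = fps * seconds   # 120 frames

# Placeholder clip: every pixel is 0 (black), stored as 8-bit integers.
video = np.zeros((num_frames, 480, 640, 3), dtype=np.uint8)

first_frame = video[0]       # indexing the first dimension yields one image

print(video.shape)        # (120, 480, 640, 3)
print(first_frame.shape)  # (480, 640, 3)
print(video.nbytes)       # 110592000
```

Indexing along the first dimension pulls out a single frame, which is exactly the 3D image tensor from the previous example. At one byte per value, this uncompressed clip holds about 110 million numbers.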

Example 3: Batches of Data

When training a model, we rarely process one piece of data at a time. For efficiency, we feed the model a “batch” of data. If we want to process 32 of our 256×256 color images at once, we stack them into a 4D tensor of shape (32, 256, 256, 3), where the first dimension is the batch size.
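Batching amounts to stacking individual image tensors along a new first dimension. The sketch below assumes NumPy and uses blank placeholder images; the final transpose shows the channels-first layout (batch, channels, height, width) that some frameworks, such as PyTorch's convolution layers, expect instead.

```python
import numpy as np

batch_size = 32

# Placeholder data: 32 blank 256x256 RGB images.
images = [np.zeros((256, 256, 3), dtype=np.uint8) for _ in range(batch_size)]

# Stack along a new leading axis to form the batch.
batch = np.stack(images, axis=0)
print(batch.shape)      # (32, 256, 256, 3)
print(batch[5].shape)   # (256, 256, 3)

# Same data, reordered to (batch, channels, height, width).
channels_first = np.transpose(batch, (0, 3, 1, 2))
print(channels_first.shape)  # (32, 3, 256, 256)
```

Whichever layout is used, the numbers are the same; only the order of the dimensions changes, so it is worth checking which convention a given library expects.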

The Language of Deep Learning

The naming in deep learning frameworks is no coincidence: TensorFlow has the word in its name, and the core data type in PyTorch is torch.Tensor. Both libraries are built around the creation and manipulation of tensors. The operations we’ve discussed, such as dot products and matrix multiplications, are generalized to work on these multi-dimensional arrays.
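One way matrix multiplication generalizes to higher-dimensional tensors is by applying it to every matrix in a stack at once. The sketch below uses NumPy's `@` operator as an illustration; the shapes and variable names are assumptions, and other libraries offer equivalent operations.

```python
import numpy as np

rng = np.random.default_rng(0)
batch = rng.standard_normal((32, 4, 5))   # a stack of 32 matrices, each 4x5
weights = rng.standard_normal((5, 3))     # a single 5x3 matrix

# The same 5x3 matrix multiplies each of the 32 matrices in the stack.
out = batch @ weights
print(out.shape)  # (32, 4, 3)

# Sanity check: entry 7 matches an ordinary 2D matrix product.
manual = batch[7] @ weights
print(np.allclose(out[7], manual))  # True
```

The leading dimension of size 32 is carried through untouched, while the last two dimensions follow the usual rule for matrix products: (4, 5) times (5, 3) gives (4, 3).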

A neural network, therefore, isn’t just a series of matrix transformations; it’s a series of tensor operations. It takes an input tensor, applies a transformation, gets a new tensor, applies another transformation, and so on, until it arrives at the final output tensor.
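The chain of transformations described above can be sketched as a tiny two-layer forward pass. Everything specific here is an assumption for illustration: the layer sizes, the random weights (real networks learn them during training), and the ReLU function used between the layers.

```python
import numpy as np

rng = np.random.default_rng(0)

# Input tensor: a batch of 8 examples with 16 features each.
x = rng.standard_normal((8, 16))

# Hypothetical layer parameters, random rather than learned.
W1, b1 = rng.standard_normal((16, 32)), np.zeros(32)
W2, b2 = rng.standard_normal((32, 4)), np.zeros(4)

h = np.maximum(0, x @ W1 + b1)   # transformation 1: linear map, then ReLU
y = h @ W2 + b2                  # transformation 2: linear map

print(x.shape, "->", h.shape, "->", y.shape)  # (8, 16) -> (8, 32) -> (8, 4)
```

Each step takes a tensor in and hands a tensor out, and the batch dimension of 8 is preserved from input to output.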

By using tensors, deep learning models can efficiently process incredibly complex, high-dimensional data while preserving its essential structure (like the spatial layout of an image or the temporal sequence of a video).

Understanding tensors means you’re no longer just looking at the basic tools of AI; you’re speaking the native language of modern deep learning. This concludes our introduction to the core mathematical objects of AI. We are now fully equipped to see how they are put to use!