Series: The Sequentia Lectures: Unlocking the Math of AI

Part 2: The AI Toolkit: Linear Algebra

Lecture 9: Matrix Multiplication: A Symphony of Transformations

If you’ve ever encountered matrix multiplication in a math class, you might remember it as a tedious, rule-heavy arithmetic task. But in the world of AI, it’s far more than that. Matrix multiplication is a symphony. It’s the single, powerful operation that allows an AI to reshape its entire understanding of the data landscape, applying complex transformations all at once.

This is the core computational step inside nearly every layer of a neural network, and today, we’re going to demystify it.

The “How”: Rows meet Columns

Let’s first quickly cover the mechanics. To multiply two matrices, A and B, to get a new matrix C, there’s one crucial rule: the number of columns in the first matrix (A) must equal the number of rows in the second matrix (B).

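The value of each element in the resulting matrix C is found by taking the dot product of a row from matrix A and a column from matrix B: the element in row i, column j of C is the dot product of row i of A and column j of B. So if A has m rows and n columns, and B has n rows and p columns, then C has m rows and p columns.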

- To get the element in the 1st row, 1st column of C, we calculate the dot product of the 1st row of A and the 1st column of B.

- To get the element in the 1st row, 2nd column of C, we calculate the dot product of the 1st row of A and the 2nd column of B.

…and so on, for every element.
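The rule above can be sketched in a few lines of plain Python. This is a minimal illustration rather than how real AI libraries do it (they use heavily optimized routines); the function name `matmul` and the example matrices are made up for this sketch.

```python
def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    n_inner = len(B)
    # The one crucial rule: columns of A must equal rows of B.
    if any(len(row) != n_inner for row in A):
        raise ValueError("columns of A must equal rows of B")
    n_cols = len(B[0])
    # C[i][j] is the dot product of row i of A and column j of B.
    return [
        [sum(A[i][k] * B[k][j] for k in range(n_inner)) for j in range(n_cols)]
        for i in range(len(A))
    ]


A = [[1, 2, 3],
     [4, 5, 6]]      # 2 rows, 3 columns
B = [[7, 8],
     [9, 10],
     [11, 12]]       # 3 rows, 2 columns

C = matmul(A, B)     # 2 rows, 2 columns
print(C)             # [[58, 64], [139, 154]]
```

For instance, the top-left element is 1×7 + 2×9 + 3×11 = 58: the 1st row of A dotted with the 1st column of B.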

Yes, it’s a lot of dot products! But why go through all this trouble? Because of what it means.

The “Why”: Composing Transformations

Remember from Lecture 7 that a matrix can represent an action or a transformation (like a rotation or a scaling). What happens when you multiply two transformation matrices together?

You compose their actions into a single, new transformation.

Imagine you want to first rotate a vector by 45 degrees, and then you want to scale it to be twice as long. You could do this in two steps:

- Multiply the vector by the “Rotation Matrix.”

- Multiply the resulting new vector by the “Scaling Matrix.”

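Or, you could first multiply the Rotation Matrix by the Scaling Matrix. This gives you a single, brand-new “RotateAndScale Matrix.” Now, you can apply this one matrix to your vector to achieve both effects in a single operation. Be careful with the order, though: matrix multiplication is generally not commutative, so “rotate, then stretch one axis” and “stretch one axis, then rotate” can give different results. With vectors written as rows (as in the data matrices later in this lecture), the transformation applied first goes on the left: vector × Rotation × Scaling.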

Matrix multiplication is like combining two separate instructions (“turn right,” “walk forward 10 steps”) into a single, more complex instruction (“move diagonally to the northeast corner”).
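Here is a small sketch of the rotate-then-scale example, checking that the two-step route and the single combined matrix agree. It assumes vectors are written as rows and that the rotation is counterclockwise; the names `R`, `S`, and `rotate_and_scale` are chosen for this illustration.

```python
import math


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


theta = math.radians(45)

# Rotation by 45 degrees counterclockwise, for row vectors (v times R).
R = [[math.cos(theta), math.sin(theta)],
     [-math.sin(theta), math.cos(theta)]]

# Scaling that makes every vector twice as long.
S = [[2, 0],
     [0, 2]]

v = [[1, 0]]  # a single row vector, stored as a 1 x 2 matrix

# Two steps: rotate first, then scale the result.
two_steps = matmul(matmul(v, R), S)

# One step: build the combined matrix once, then apply it.
rotate_and_scale = matmul(R, S)
one_step = matmul(v, rotate_and_scale)

same = all(math.isclose(a, b) for a, b in zip(two_steps[0], one_step[0]))
print(same)                        # True
print(math.hypot(*one_step[0]))    # approximately 2.0: the vector is twice as long
```

The payoff of the combined matrix shows up when there are many vectors: `rotate_and_scale` is computed once and then reused for every one of them.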

Reshaping the Entire Landscape at Once

Now, let’s bring back our data. Remember that we can store our entire dataset (e.g., thousands of user profiles or images) in a single large data matrix, where each row is a vector.

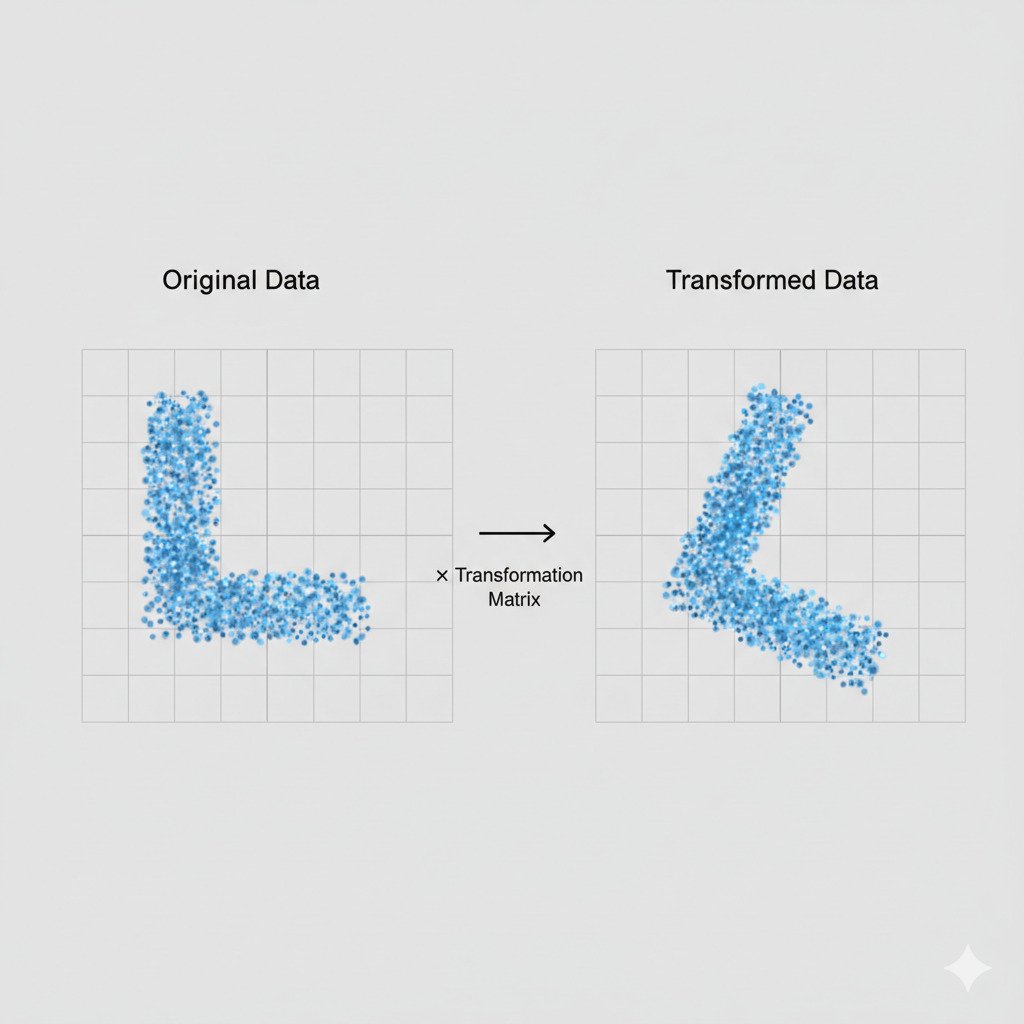

When we multiply our Data Matrix by a Transformation Matrix, we are performing a symphony of dot products. Every row vector of our data is transformed by the same Transformation Matrix, and the transformed vectors become the rows of the result.

This means with a single matrix multiplication, we can:

- Rotate our entire cloud of data points.

- Stretch the landscape along certain axes, pulling similar points closer together and pushing different ones further apart.

- Project the data from a high-dimensional space into a lower-dimensional one to make it easier to visualize and classify.
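As a rough sketch of two of these operations, the example below stretches and then projects a tiny, made-up dataset of four 3-D points. The data values and the names `stretch_x` and `project_xy` are assumptions for illustration only.

```python
def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


# Hypothetical data matrix: 4 data points in 3-D, one point per row.
data = [[1, 2, 3],
        [4, 5, 6],
        [7, 8, 9],
        [10, 11, 12]]

# Stretch: double the first axis, leave the other two alone (3 x 3).
stretch_x = [[2, 0, 0],
             [0, 1, 0],
             [0, 0, 1]]

# Project: keep the first two coordinates, drop the third (3 x 2).
project_xy = [[1, 0],
              [0, 1],
              [0, 0]]

stretched = matmul(data, stretch_x)    # still 4 x 3
projected = matmul(data, project_xy)   # now 4 x 2

print(stretched)  # [[2, 2, 3], [8, 5, 6], [14, 8, 9], [20, 11, 12]]
print(projected)  # [[1, 2], [4, 5], [7, 8], [10, 11]]
```

Note how the shape of the Transformation Matrix decides the shape of the output: a 3 × 2 matrix turns every 3-D point into a 2-D point in one multiplication.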

This is the heart of a neural network. An input (like an image vector) is fed into the network.

- Layer 1’s matrix multiplication transforms the data.

- Layer 2’s matrix multiplication transforms it again.

- Layer 3’s matrix multiplication transforms it yet again.

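Each layer has a matrix of weights that has been “learned” (through a process called training) to perform a very specific transformation. In practice, each layer also applies a simple non-linear function, called an activation, after its multiplication. Without it, the whole stack would collapse into a single transformation, because multiplying several matrices together just gives one more matrix. The goal is that after this sequence of transformations, the originally messy data landscape will be reshaped so that all the “cat” points are neatly clustered in one corner, and all the “dog” points are in another, making them easy to separate with a simple boundary.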
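A minimal sketch of such a layer-by-layer pass is shown below. The weights `W1`, `W2`, and `W3` are made up by hand (a real network would learn them during training), and the `relu` step, a simple non-linear function commonly applied after a layer's multiplication, is an assumed choice for this illustration.

```python
def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def relu(M):
    """Replace every negative entry with 0 (an assumed, common non-linearity)."""
    return [[max(0, x) for x in row] for row in M]


# Hypothetical, hand-picked weights; training would normally set these.
W1 = [[1, -1],
      [0, 2],
      [-1, 1]]    # layer 1: 3 inputs -> 2 outputs
W2 = [[1, 1],
      [-2, 1]]    # layer 2: 2 inputs -> 2 outputs
W3 = [[1],
      [-1]]       # layer 3: 2 inputs -> 1 output

x = [[1, 2, 3]]   # one input vector, stored as a 1 x 3 matrix

# With a non-linearity between layers.
h1 = relu(matmul(x, W1))
h2 = relu(matmul(h1, W2))
out = matmul(h2, W3)
print(out)  # [[-6]]

# Without it, the three layers are equivalent to one combined matrix.
plain = matmul(matmul(matmul(x, W1), W2), W3)
collapsed = matmul(x, matmul(matmul(W1, W2), W3))
print(plain, collapsed)  # [[-18]] [[-18]]
```

The second half is the composition idea from earlier in this lecture: three plain multiplications in a row do nothing that one pre-multiplied matrix could not do.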

Matrix multiplication is not just arithmetic; it’s the engine of transformation that allows a neural network to take a chaotic input and sculpt it, layer by layer, into a beautifully organized and understandable structure.

In our next lecture, we’ll finally put all these pieces together and build our first, simplest AI model from scratch: Linear Regression.